How To Deploy AI Fast While Maintaining Zero Trust Security

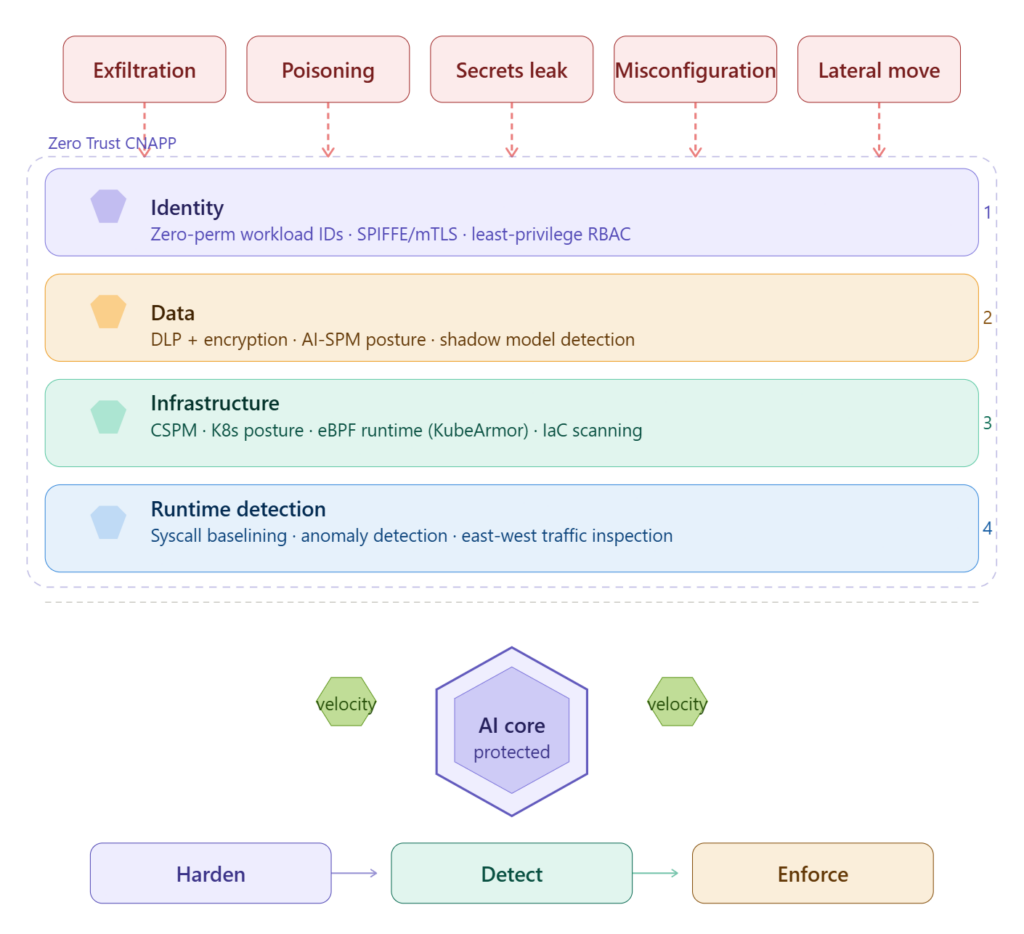

AI is now embedded across applications, cloud services, and Kubernetes, often faster than security programs can adapt. This guide explains how to keep delivery velocity while enforcing Zero Trust across identity, data, and runtime controls using AI-SPM and Zero Trust CNAPP principles.


TL;DR

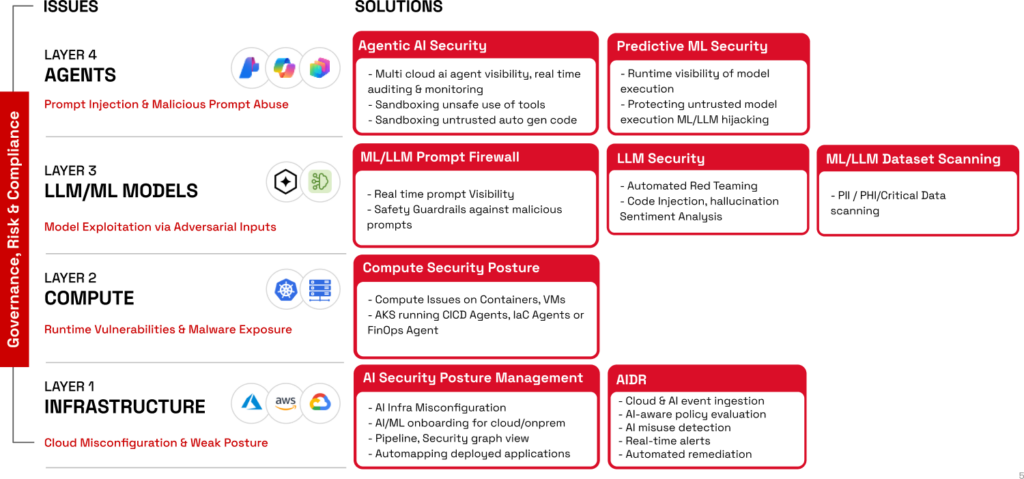

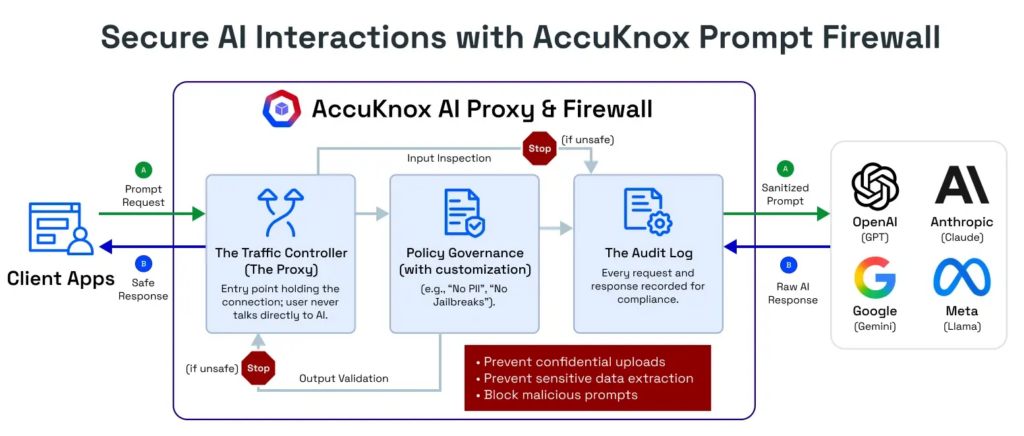

- Reality: AI is already in production across LLMs, agents, and analytics, so Zero Trust must extend to models, prompts, data, and pipelines, not just apps.

- Speed: “Deploying AI fast” means continuous delivery, rapid fine-tuning, and distributed stacks that demand automated guardrails, not manual reviews.

- Identity: Treat humans and non-human actors (agents, services, workloads) as first-class identities with least privilege and continuous verification.

- Runtime: Posture is necessary but insufficient; microsegmentation and runtime enforcement shrink blast radius when (not if) something breaks.

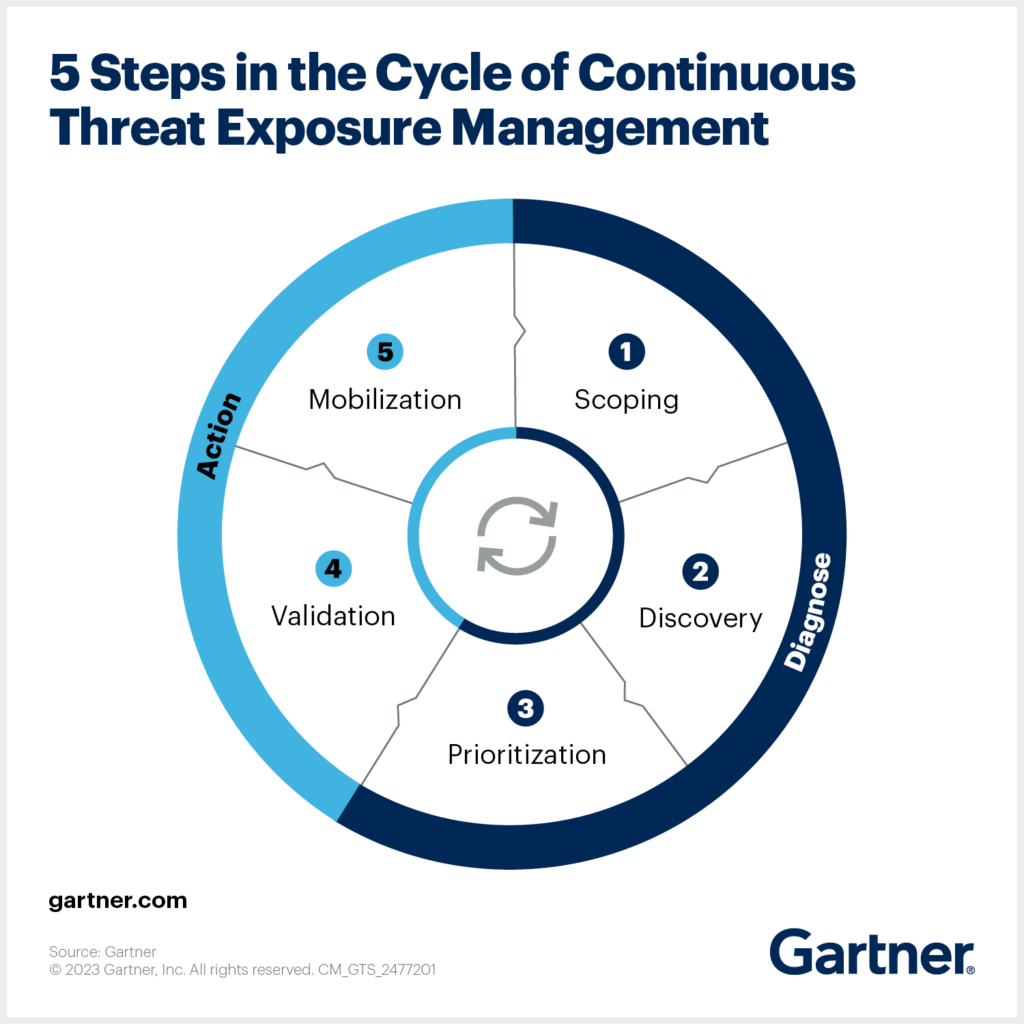

- Blueprint: Start with AI inventory (AI-SPM), harden posture in CI/CD and cloud, then add AI-aware detection and continuous compliance with a unified Zero Trust CNAPP.

AI has become an integral part of operations in many industries, including finance, health care, manufacturing and automotive. It is no longer a question of whether this technology should be deployed; the primary question for business owners is how to deploy it fast. As exciting as automation and innovation are, companies need to balance the agility required for high-octane AI deployment with the rigorous demands of a zero-trust architecture.

Security processes are often viewed as restrictive, usually slowing down the implementation of innovative change in the name of safety and protocol. However, when a single compromised AI model can lead to catastrophic data exfiltration, these principles of cyber-vigilance are more necessary than ever.

By implementing carefully built security strategies around identity, data and infrastructure, companies can keep pace with a rapidly evolving landscape while safeguarding sensitive data. The failure mode is rarely a single exotic AI exploit. It is fast change combined with fragile cloud and Kubernetes posture, over-permissioned identities, unmanaged secrets, and blind runtime behavior: exactly the conditions that turn small missteps into incidents at scale. In practice, that shows up as data exfiltration via model endpoints and agents, pipeline tampering (including data poisoning), secrets leakage, misconfigurations exposing notebooks or inference services, and lateral movement inside clusters.

By the end of this post, you will be able to apply a phased blueprint that preserves AI velocity while enforcing Zero Trust across identity, data, runtime, and compliance, grounded in AI-SPM and a unified Zero Trust CNAPP platform approach.

Understanding the Core Concepts

Deploying AI fast is about the ability to test, update and scale models almost instantly. This is usually managed through MLOps and DevOps, which are practices designed for continuous delivery. With about 78% of organizations reporting AI use in at least one business function (McKinsey Global Survey), companies are speeding through development phases, closing the gap between research and production as quickly as they can.

This level of speed can often clash with the core tenets of zero trust, defined by least privilege access, microsegmentation and continuous monitoring. The tension is especially accentuated when data access and model governance are brought into view, as large language models require significant amounts of data for fine-tuning.

Without a zero-trust framework, this constant data flow creates a large and unmonitored target for attackers. To fix this, security should be automated as part of the pipeline rather than handled manually at the end. By viewing solid security practices as the very foundation of operations, businesses can effectively close off potential entry points for cyberattacks.
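As a minimal sketch of what “automated as part of the pipeline” can mean, the Python below treats scanner output as data and fails a build stage when any finding exceeds a severity threshold. The `Finding` type, the severity scale, the source labels, and the `gate` function are hypothetical illustrations, not any particular scanner's or CI system's API.

```python
from dataclasses import dataclass

# Hypothetical severity scale; real scanners define their own.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass
class Finding:
    source: str    # illustrative labels, e.g. "image-scan", "secret-scan", "iac-scan"
    severity: str  # must be a key of SEVERITY_RANK
    message: str


def gate(findings: list[Finding], max_allowed: str = "medium") -> tuple[bool, list[str]]:
    """Return (passed, blocking_reasons) for one pipeline stage."""
    limit = SEVERITY_RANK[max_allowed]
    blocking = [
        f"{f.source}: {f.message}"
        for f in findings
        if SEVERITY_RANK[f.severity] > limit
    ]
    return (not blocking, blocking)


if __name__ == "__main__":
    results = [
        Finding("image-scan", "medium", "outdated base image"),
        Finding("secret-scan", "critical", "API key committed in a notebook"),
    ]
    passed, reasons = gate(results)
    print("PASS" if passed else "FAIL", reasons)
```

In a real pipeline, a FAIL would be turned into a non-zero exit code so the job stops before deployment, and the threshold becomes reviewable policy rather than a manual sign-off at the end.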

Identity-Centric Security for AI

Identity is now the primary security boundary. To keep deployments moving quickly, many organizations use role-based access control to ensure that team members have access only to the information and data relevant to their specific tasks, preventing a single compromised account from granting access to the company’s entire database.
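The deny-by-default logic behind role-based access control fits in a few lines. This Python sketch assumes a hypothetical mapping from roles to (resource, action) pairs; the role and resource names are illustrative only, and a production system would load such definitions from an identity provider or policy engine.

```python
# Hypothetical role definitions: each role grants specific (resource, action) pairs.
ROLE_PERMISSIONS = {
    "data-engineer": {("training-data", "read"), ("training-data", "write")},
    "ml-engineer": {("training-data", "read"), ("model-registry", "write")},
    "analyst": {("dashboards", "read")},
}


def is_allowed(roles: set[str], resource: str, action: str) -> bool:
    """Deny by default: allow only if at least one assigned role grants the pair."""
    return any(
        (resource, action) in ROLE_PERMISSIONS.get(role, set())
        for role in roles
    )


if __name__ == "__main__":
    print(is_allowed({"ml-engineer"}, "model-registry", "write"))  # True
    print(is_allowed({"analyst"}, "training-data", "read"))        # False
```

In this model, a compromised `analyst` account cannot read training data at all, which is the containment property described above.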

One of the most effective ways to stop unauthorized movement through a network is to enforce strong authentication for every identity: multi-factor authentication for human users such as developers and administrators, and short-lived, verifiable credentials for the automated accounts that manage servers. In 2026, this is often paired with privileged access management (PAM), which monitors the high-level accounts that control the AI infrastructure. When companies automate these access checks, security keeps pace with development.

Data-Centric Security

Data is the most valuable asset in the AI pipeline, making it a key target for modern cybercrime. Achieving speed requires automated data discovery and classification tools that label sensitive data in real time before it enters a model. These labels then guide data loss prevention (DLP) solutions to prevent the unauthorized exfiltration of sensitive training data or proprietary model weights.
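To illustrate the classify-then-enforce flow, the sketch below uses regular expressions as a stand-in for a real classifier: `classify` labels sensitive values and `redact` shows one possible DLP-style action before text reaches a model. The patterns, label names, and function names are hypothetical and deliberately simple; production tools use much richer detection.

```python
import re

# Illustrative detectors only; real classification tools use much richer methods.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),  # 13 to 16 digits
}


def classify(text: str) -> set[str]:
    """Return the sensitive-data labels detected in the text."""
    return {label for label, pattern in PATTERNS.items() if pattern.search(text)}


def redact(text: str) -> str:
    """Replace each detected value with a placeholder before it reaches a model."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label.upper()}]", text)
    return text


if __name__ == "__main__":
    record = "Contact jane.doe@example.com, SSN 123-45-6789."
    print(sorted(classify(record)))  # ['email', 'us_ssn']
    print(redact(record))            # Contact [EMAIL], SSN [US_SSN].
```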

When protecting data during the training phase, data masking and anonymization are essential. A more formal technique is differential privacy, which adds calibrated statistical noise so that the aggregate patterns needed to train AI are preserved while the influence of any single record on the result is mathematically bounded, making it very difficult to identify or infer individual records.
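To make the differential-privacy idea concrete, here is a minimal sketch of the Laplace mechanism applied to a counting query, one standard way to achieve ε-differential privacy for aggregates (it perturbs query results rather than the raw dataset). Adding or removing one record changes a count by at most 1, so noise drawn from Laplace(0, 1/ε) suffices; a smaller ε means more noise and stronger privacy. The function names are hypothetical, and Python's `random` module is not suitable for production privacy guarantees, where a vetted DP library should be used instead.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two i.i.d. exponential draws with mean `scale`
    # follows a Laplace(0, scale) distribution.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon: float, rng=None) -> float:
    """Return an epsilon-differentially-private count of records matching predicate.

    A counting query has sensitivity 1, so the Laplace noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)


if __name__ == "__main__":
    ages = [23, 35, 41, 52, 67, 29, 38]
    # Noisy answer to "how many people are over 40?"; the exact value is 3.
    print(private_count(ages, lambda age: age > 40, epsilon=0.5))
```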

This data-centric approach is crucial in the manufacturing sector, one of the world’s top targets for cyberattacks. As AI takes over predictive quality control, protecting the underlying proprietary data has become as important as the plant’s physical production capacity. Ensuring comprehensive data lineage and auditability is therefore essential for industrial companies to protect their intellectual property.

Infrastructure and Network Security

A zero-trust network assumes that an intruder has already bypassed the perimeter. An effective way to ensure that a single leak does not compromise all data is to break the network into smaller, isolated zones, a practice known as microsegmentation.

This containment strategy, often called limiting the “blast radius,” is fundamental to zero trust. It ensures that a breach is not a catastrophe, but a manageable event. By assuming that any network segment could be compromised, companies can build a more resilient system where a single failure does not endanger the entire infrastructure.

If one segment is compromised, the others remain effectively locked away. For example, keeping the AI training environment completely separate from the production environment prevents sensitive customer information from being compromised in the event of a vulnerability.
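The blast-radius argument can be checked mechanically. This Python sketch models zones and an explicit allow-list of flows (everything else is denied), then computes which zones an attacker could reach from a compromised zone by chaining allowed flows. The zone names and flows are hypothetical; in Kubernetes, the enforcement itself would typically come from network policies or a microsegmentation layer, not from code like this.

```python
# Hypothetical allow-list of (source_zone, destination_zone) flows; all else is denied.
ALLOWED_FLOWS = {
    ("training", "feature-store"),
    ("ci-pipeline", "model-registry"),
    ("model-registry", "inference"),
}


def can_connect(src_zone: str, dst_zone: str) -> bool:
    """Default deny between zones; traffic within a zone is allowed in this sketch."""
    return src_zone == dst_zone or (src_zone, dst_zone) in ALLOWED_FLOWS


def blast_radius(compromised: str) -> set[str]:
    """All zones reachable from a compromised zone by chaining allowed flows."""
    reached, frontier = {compromised}, [compromised]
    while frontier:
        zone = frontier.pop()
        for src, dst in ALLOWED_FLOWS:
            if src == zone and dst not in reached:
                reached.add(dst)
                frontier.append(dst)
    return reached


if __name__ == "__main__":
    print(sorted(blast_radius("training")))     # ['feature-store', 'training']
    print(sorted(blast_radius("ci-pipeline")))  # ['ci-pipeline', 'inference', 'model-registry']
```

Here a compromised training environment cannot reach the inference (production) zone; adding a single careless flow would show up immediately as a larger reachable set.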

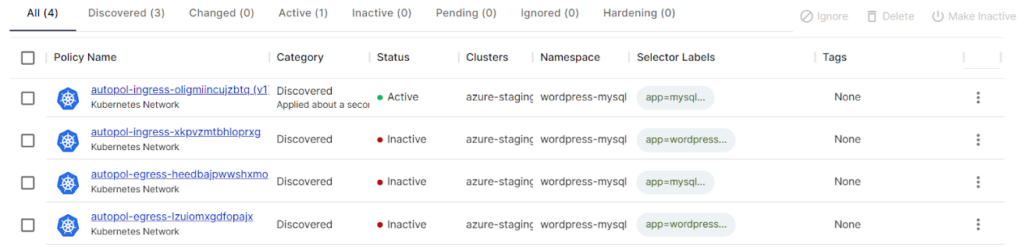

Because most modern workloads are containerized, container security is a key part of the infrastructure. This involves scanning every image for vulnerabilities before launch and using image signing to ensure only authorized code runs. For companies using multiple cloud providers, a cloud native application protection platform (CNAPP) provides a single view for monitoring and enforcing policies across all clusters.
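A deploy-time admission check can combine these controls. The sketch below assumes the signature-verification result (for example, from Sigstore cosign) and the critical-vulnerability count are produced by tools that run earlier; `ImageReport`, `admit`, and the registry name are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical trusted registry prefix.
TRUSTED_REGISTRIES = ("registry.example.com/",)


@dataclass
class ImageReport:
    reference: str            # image reference, ideally pinned by digest
    signature_verified: bool  # produced by a signing tool run earlier (assumed)
    critical_vulns: int       # produced by a vulnerability scanner run earlier (assumed)


def admit(report: ImageReport) -> list[str]:
    """Return rejection reasons; an empty list means the image may be deployed."""
    reasons = []
    if not report.reference.startswith(TRUSTED_REGISTRIES):
        reasons.append("untrusted registry")
    if "@sha256:" not in report.reference:
        reasons.append("image not pinned by digest")
    if not report.signature_verified:
        reasons.append("signature not verified")
    if report.critical_vulns > 0:
        reasons.append(f"{report.critical_vulns} critical vulnerabilities")
    return reasons


if __name__ == "__main__":
    print(admit(ImageReport("docker.io/library/python:latest", False, 2)))
```

Pinning by digest matters because a tag such as `latest` can be repointed after scanning, while a digest identifies exact image content.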

Additionally, immutable infrastructure means that once something is deployed, it is never modified in place. If a patch is required, the old instance is deleted completely and an updated version is launched in its place. This prevents configuration drift, where small, undocumented changes compound and create hidden security gaps over time.
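Drift detection against an immutable baseline reduces to comparing a fingerprint of the declared configuration with what is actually running. This sketch assumes configurations are JSON-serializable dictionaries; `fingerprint` and `detect_drift` are hypothetical helper names. Under immutable infrastructure, the response to reported drift is to redeploy from the baseline rather than patch in place.

```python
import hashlib
import json

_MISSING = object()  # sentinel so a missing key differs from an explicit None


def fingerprint(config: dict) -> str:
    """SHA-256 of canonical JSON, so key order does not change the hash."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def detect_drift(baseline: dict, live: dict) -> list[str]:
    """Return the top-level keys whose live values differ from the baseline."""
    if fingerprint(baseline) == fingerprint(live):
        return []
    keys = set(baseline) | set(live)
    return sorted(
        k for k in keys if baseline.get(k, _MISSING) != live.get(k, _MISSING)
    )


if __name__ == "__main__":
    baseline = {"image": "registry.example.com/infer@sha256:abc", "replicas": 2, "debug": False}
    live = {**baseline, "debug": True, "extra_port": 8888}
    print(detect_drift(baseline, live))  # ['debug', 'extra_port']
```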

Continuous Monitoring and Threat Intelligence

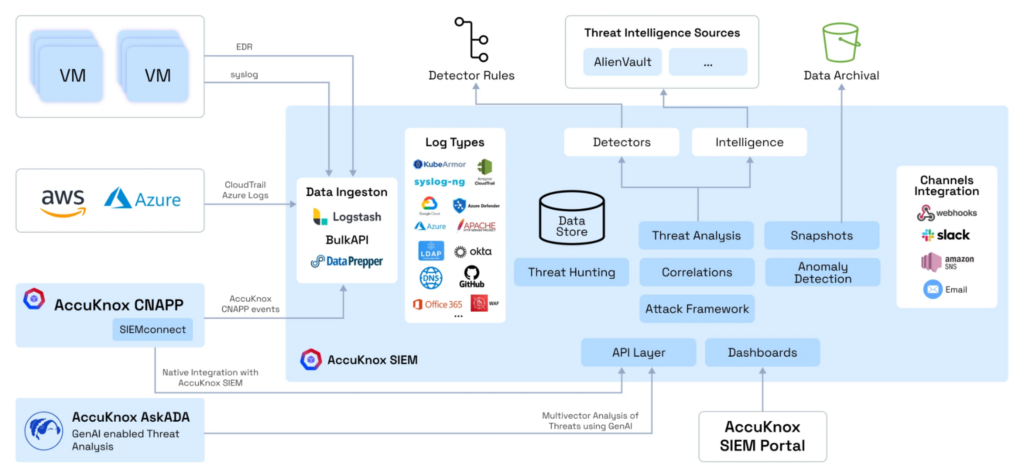

The final layer of defense lies in continuous, automated monitoring. Security logs and events are funneled into a security information and event management (SIEM) system for centralized analysis. Because these environments generate large amounts of data, teams also use user and entity behavior analytics (UEBA) to spot patterns that a human analyst might miss, such as an automated account suddenly attempting to access a database it has never accessed before.
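An automated account touching a database it has never accessed before is a “first-seen” behavioral rule. The Python sketch below learns, per identity, which resources it normally uses and flags new ones after a warm-up period. Real UEBA products use richer statistical models; `AccessBaseline` and the warm-up threshold are hypothetical.

```python
from collections import defaultdict


class AccessBaseline:
    """Per-identity record of accessed resources; flags first-time access."""

    def __init__(self, learning_events: int = 100):
        self.learning_events = learning_events  # hypothetical warm-up length
        self.seen = defaultdict(set)
        self.counts = defaultdict(int)

    def observe(self, identity: str, resource: str) -> bool:
        """Record one access and return True if it should raise an alert."""
        is_new = resource not in self.seen[identity]
        still_learning = self.counts[identity] < self.learning_events
        self.seen[identity].add(resource)
        self.counts[identity] += 1
        return is_new and not still_learning


if __name__ == "__main__":
    baseline = AccessBaseline(learning_events=3)
    for _ in range(3):
        baseline.observe("svc-etl", "feature-db")  # warm-up: builds the baseline
    print(baseline.observe("svc-etl", "feature-db"))   # False: known resource
    print(baseline.observe("svc-etl", "customer-db"))  # True: first-seen access
```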

Staying ahead of new threats requires integrating real-time threat intelligence feeds focused on emerging exploits. Regular vulnerability scanning is also necessary to identify outdated or vulnerable software and libraries so they can be patched. As a final layer of protection, organizations can secure cloud workloads at runtime.

Runtime protection acts as a kernel-level enforcement layer that blocks unauthorized file access as it happens, providing a safety net that keeps pace with fast deployments. Combined with continuous monitoring, this proactive approach makes a successful breach far less likely.
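Kernel-level enforcement itself happens in mechanisms such as Linux Security Modules or eBPF, but the policy being enforced is easy to illustrate. The Python sketch below evaluates a hypothetical per-workload allow-list of readable directories; it only models the decision logic and does not block anything at the kernel level. The workload name and paths are assumptions.

```python
from pathlib import PurePosixPath

# Hypothetical policy: directories each workload may read. Everything else is denied.
ALLOWED_READ_DIRS = {
    "inference-server": [PurePosixPath("/models"), PurePosixPath("/etc/inference")],
}


def file_access_allowed(workload: str, path: str) -> bool:
    """Allow a read only if the path is inside one of the workload's allowed dirs."""
    p = PurePosixPath(path)
    if ".." in p.parts:
        return False  # refuse unnormalized paths instead of trying to resolve them
    return any(p == d or d in p.parents for d in ALLOWED_READ_DIRS.get(workload, []))


if __name__ == "__main__":
    print(file_access_allowed("inference-server", "/models/llm/weights.bin"))  # True
    print(file_access_allowed("inference-server", "/etc/shadow"))              # False
```

Comparing path components rather than string prefixes keeps a directory such as `/models-backup` from matching `/models`.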

Identity Is The New Perimeter For Humans And AI Agents

AI delivery pipelines multiply identities. You have human developers and platform owners, but also workload identities for training jobs and inference services, and increasingly agents that call internal tools and APIs. Treating those non-human actors as “just another service account” is how least privilege quietly collapses.

- Separate roles by function: data engineering, ML engineering, platform ops, security, and production release.

- Use just-in-time elevation for high-risk actions such as model promotion, endpoint exposure, and secrets rotation.

- Continuously review Kubernetes RBAC and entitlements for namespaces that run AI workloads.
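Just-in-time elevation can be sketched as approved, time-boxed grants that expire on their own. In this Python example, the action names mirror the high-risk actions listed above, while `JITBroker`, the approval rule (no self-approval), and the 15-minute cap are hypothetical choices rather than any product's behavior.

```python
import time
from dataclasses import dataclass, field
from typing import Optional

HIGH_RISK_ACTIONS = {"promote-model", "expose-endpoint", "rotate-secrets"}


@dataclass
class Grant:
    user: str
    action: str
    approver: str
    expires_at: float


@dataclass
class JITBroker:
    max_ttl_seconds: float = 900.0  # hypothetical 15-minute cap
    grants: list = field(default_factory=list)

    def request(self, user: str, action: str, approver: str,
                ttl_seconds: float, now: Optional[float] = None) -> Grant:
        if action not in HIGH_RISK_ACTIONS:
            raise ValueError(f"{action!r} is not a JIT-managed action")
        if approver == user:
            raise PermissionError("self-approval is not allowed")
        now = time.time() if now is None else now
        grant = Grant(user, action, approver, now + min(ttl_seconds, self.max_ttl_seconds))
        self.grants.append(grant)  # keeps a record of who approved what
        return grant

    def is_authorized(self, user: str, action: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        return any(g.user == user and g.action == action and g.expires_at > now
                   for g in self.grants)


if __name__ == "__main__":
    broker = JITBroker()
    broker.request("alice", "promote-model", approver="bob", ttl_seconds=3600, now=0.0)
    print(broker.is_authorized("alice", "promote-model", now=600.0))   # True
    print(broker.is_authorized("alice", "promote-model", now=1200.0))  # False: capped at 900 s
```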

AccuKnox KIEM helps analyze Kubernetes RBAC and drive least-privilege recommendations for clusters that run AI workloads, so identity control is enforced as engineering reality, not an audit-time spreadsheet.
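To illustrate the kind of RBAC analysis involved (this is not how KIEM is implemented), the Python sketch below flags risky rules in a Kubernetes Role or ClusterRole that has already been parsed into a dictionary: wildcard grants, the privilege-escalation verbs `escalate`, `bind`, and `impersonate`, and read access to Secrets. The function name and the selection of checks are assumptions.

```python
RISKY_VERBS = {"escalate", "bind", "impersonate"}
SECRET_READ_VERBS = {"get", "list", "watch"}


def audit_role(role: dict) -> list[str]:
    """Return least-privilege findings for a parsed Kubernetes Role/ClusterRole."""
    name = role.get("metadata", {}).get("name", "<unnamed>")
    findings = []
    for i, rule in enumerate(role.get("rules", [])):
        verbs = set(rule.get("verbs", []))
        resources = set(rule.get("resources", []))
        api_groups = set(rule.get("apiGroups", []))
        if "*" in verbs or "*" in resources or "*" in api_groups:
            findings.append(f"{name} rule {i}: wildcard grant")
        if verbs & RISKY_VERBS:
            findings.append(f"{name} rule {i}: escalation verbs {sorted(verbs & RISKY_VERBS)}")
        if "secrets" in resources and verbs & SECRET_READ_VERBS:
            findings.append(f"{name} rule {i}: can read secrets")
    return findings


if __name__ == "__main__":
    trainer_role = {
        "kind": "Role",
        "metadata": {"name": "trainer", "namespace": "ml"},
        "rules": [
            {"apiGroups": [""], "resources": ["secrets"], "verbs": ["get", "list"]},
            {"apiGroups": ["*"], "resources": ["*"], "verbs": ["*"]},
        ],
    }
    for finding in audit_role(trainer_role):
        print(finding)
```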

Data-Centric AI Security: Discovery, Classification, DLP, And Secrets

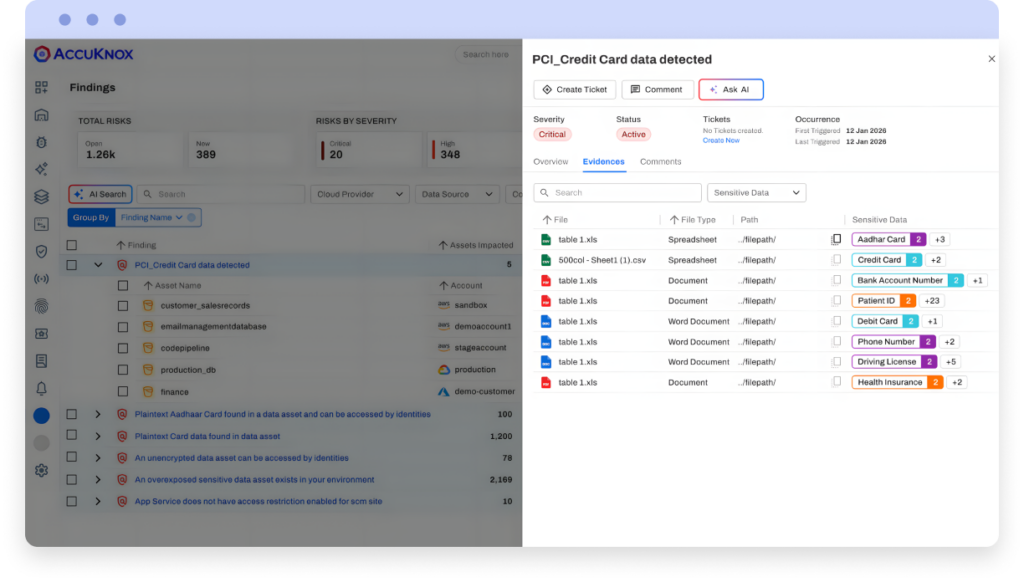

If identity is the control plane, data is the highest-leverage surface. Models learn from data. Inference can leak data. Agents retrieve data. And logs often capture sensitive prompts, outputs, and tokens as “debugging convenience.”

- Data discovery and classification: explicitly separate training data, evaluation data, and inference context sources, then bind them to access policies.

- DLP-style guardrails for model inputs and outputs where required, especially when LLMs touch regulated or customer-facing workflows.

- Masking and environment separation: non-production pipelines should not inherit production data access or long-lived credentials.

- Differential privacy as an option for specific high-sensitivity use cases, when product and governance requirements justify the tradeoffs.

- Secrets management and secret scanning across repos, images, and runtime, because AI stacks amplify the consequences of token and key leakage.
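A minimal secret scanner is a set of detection rules applied line by line. The Python sketch below uses three illustrative rules: the documented AWS access key ID format, PEM private-key headers, and a generic “token / api key / secret assigned a long quoted string” heuristic. Real scanners ship many more rules plus entropy checks; the rule names and `scan_text` are hypothetical.

```python
import re

# Illustrative rules only; rule names are hypothetical.
SECRET_RULES = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private-key": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "generic-token": re.compile(
        r"(?i)(?:api[_-]?key|token|secret)\s*[:=]\s*['\"][^'\"]{16,}['\"]"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every rule that matches a line."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_RULES.items():
            if pattern.search(line):
                hits.append((lineno, rule))
    return hits


if __name__ == "__main__":
    notebook_cell = (
        'model_name = "llama"\n'
        'OPENAI_TOKEN = "sk-test-0123456789abcdef"\n'
        'aws_key = "AKIAIOSFODNN7EXAMPLE"\n'
    )
    print(scan_text(notebook_cell))  # [(2, 'generic-token'), (3, 'aws-access-key-id')]
```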

For regulated organizations, the requirement is not only “prevent leakage,” but “prove control.” That is where continuous compliance becomes operational: AccuKnox supports continuous compliance across 30+ frameworks for cloud and workload environments, enabling audit-ready evidence alongside enforcement.

Staying Ahead While Staying Safe With Zero Trust Security

Deploying AI fast while staying true to zero-trust security principles will soon become standard practice across industries. As automation becomes a necessity, frameworks that address the cybersecurity risks it introduces will become equally essential. When companies approach security in layers and account for identity, data and constant monitoring, they can gain the agility they need without taking on unmanaged risk.

As the landscape becomes increasingly competitive, businesses that stay ahead of the curve will treat security as a fundamental component of the AI lifecycle rather than an afterthought. By ensuring that security serves as a foundation for operations, companies can innovate confidently and securely.

Infrastructure And Runtime Security For AI Workloads (CSPM, KSPM, CWPP)

Posture findings matter, but runtime is where exploitation and data movement actually happen. For AI workloads, the practical goal is to harden the stack end-to-end and then enforce expected behavior so compromise cannot “free roam” across the cluster or cloud estate.

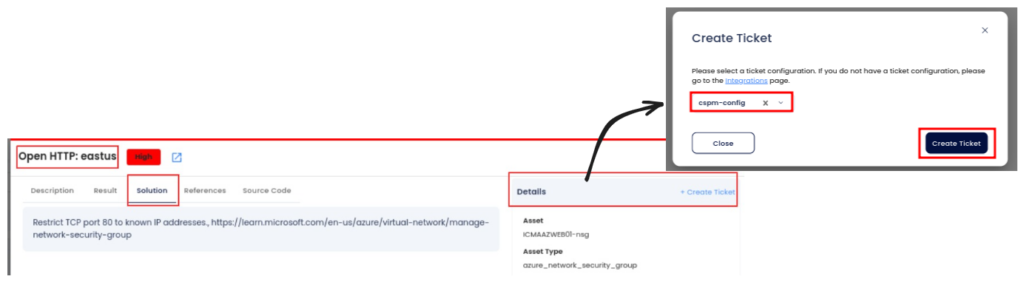

- CSPM: continuous misconfiguration and drift detection across multi-cloud dependencies that AI pipelines rely on (storage, networking, identities, and exposed services).

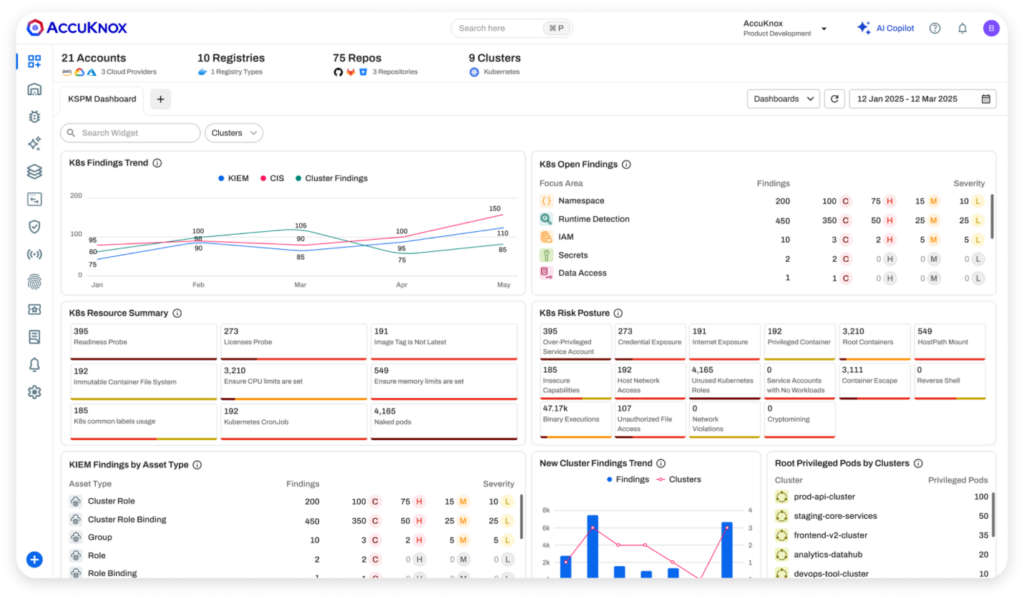

- KSPM: cluster hardening, RBAC review, and admission controls to stop risky configurations at deploy-time.

- CWPP/runtime security: behavior-based detection with inline prevention for workloads that run training and inference, enforced where the workload executes.

AccuKnox runtime security uses eBPF and Linux Security Modules (AppArmor/SELinux/BPF-LSM) via KubeArmor for inline mitigation and least-privilege policy enforcement. When paired with microsegmentation, runtime controls shrink blast radius by restricting, by default, what workloads can execute, read, and reach.

| AI Surface | Common Failure Mode | Zero Trust Control | Where It Lives |
|---|---|---|---|
| Notebooks | Public exposure, overbroad permissions, embedded tokens | Role separation, least-privilege policies, secret scanning and rotation | Cloud + CI/CD |
| Data Stores / Feature Pipelines | Misconfigured storage, weak lineage and access boundaries | Classification + least privilege, DLP guardrails, drift detection | Cloud + Runtime |
| Model Registry / Artifacts | Uncontrolled promotion, weak integrity and provenance | Signed promotion workflows, least-privileged release roles, audit trails | CI/CD + GRC |
| CI/CD For ML | Unsafe images, leaked secrets, drift between build and deploy | Policy-as-code gates (IaC, images, secrets) + verified promotion paths | CI/CD |
| Inference Endpoints / LLM APIs | Unbounded access, weak authn/z, overexposed network paths | Strong identity controls + segmentation + runtime anomaly and policy enforcement | Cloud + K8s + Runtime |
| Agent Tool Execution | Overbroad API/file access and weak auditing of actions | Constrained tool permissions + runtime allow-lists + segmented reachability | Runtime + GRC |

Why SIEM Alone Isn’t Enough: AI-DR, Runtime Telemetry, And Threat Intelligence

A SIEM is necessary for aggregation and governance, but it is not sufficient for AI workloads. The decisive signals are often runtime behaviors and policy violations (process execution, file access, unusual network paths), not only application logs. That is why AI detection and response (AI-DR) needs runtime telemetry and enforcement in the same loop.

- Runtime telemetry across Kubernetes and workloads (process, file, and network) to identify behavior that breaks expected policy boundaries.

- Detection coverage for endpoint abuse patterns and agent misuse (for example, tool calls that exceed a defined permission envelope).

- Threat intelligence context to prioritize what matters now, aligned to your environment and blast radius.

- Automated response paths that reduce time-to-containment, not just time-to-alert.
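The “permission envelope” for agent tool calls can be expressed as an explicit allow-list plus a call budget, with every decision logged for audit. This Python sketch is a hypothetical illustration: `ToolPolicy`, `check_tool_call`, the tool names, and the budget are assumptions, and in practice such a check would sit alongside runtime enforcement rather than replace it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    allowed_tools: frozenset  # hypothetical tool names the agent may call
    max_calls_per_task: int   # hypothetical budget to bound runaway loops


def check_tool_call(policy: ToolPolicy, agent_id: str, tool: str,
                    calls_so_far: int, audit_log: list) -> str:
    """Decide whether a tool call stays inside the envelope and record the decision."""
    if tool not in policy.allowed_tools:
        decision = "deny: tool outside permission envelope"
    elif calls_so_far >= policy.max_calls_per_task:
        decision = "deny: call budget exceeded"
    else:
        decision = "allow"
    audit_log.append((agent_id, tool, decision))  # every decision is auditable
    return decision


if __name__ == "__main__":
    policy = ToolPolicy(frozenset({"search_docs", "read_ticket"}), max_calls_per_task=5)
    log = []
    print(check_tool_call(policy, "support-agent", "search_docs", 0, log))  # allow
    print(check_tool_call(policy, "support-agent", "run_shell", 1, log))    # deny: tool outside permission envelope
```

A denied call is exactly the kind of event that should feed detection and response, since it signals either a misbehaving agent or an attempt to steer one.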

AccuKnox positions Cloud Detection and Response (CDR) plus AI-assisted remediation to reduce response time by up to 95%. The key architectural point is consistent regardless of tooling choice: monitoring must be coupled with enforcement so the same path cannot be replayed at scale.

Move Fast, But Make Zero Trust Non-Negotiable

AI delivery speed is sustainable only when identity, data, and runtime controls are automated and enforced continuously. Agentic workflows and multi-cloud AI stacks expand the attack surface, but posture plus runtime enforcement keeps failures contained and auditable.

Evaluate platforms and architectures that unify AI inventory (AI-SPM), posture (CSPM/KSPM), runtime enforcement (eBPF/LSM), and compliance evidence, so governance scales with adoption. That is the operational bar for Zero Trust AI security: not a slogan, but a system.

Frequently Asked Questions

What Is AI-SPM And Why Does It Matter For Production AI?

AI-SPM helps you discover AI assets (models, endpoints, pipelines) and continuously assess misconfigurations and policy gaps so teams can ship AI fast with controlled risk.

How Do You Apply Zero Trust To Agentic AI Systems And Non-Human Actors?

Treat agents and workloads as identities with least privilege, constrain tool/API access, and continuously verify behavior at runtime; then segment what they can reach to reduce blast radius.

Is CSPM/KSPM Enough To Secure AI Workloads Running On Kubernetes?

CSPM/KSPM harden posture, but AI workloads still need runtime security and microsegmentation to prevent unexpected execution, credential abuse, and lateral movement in-cluster.

Can Zero Trust Runtime Enforcement Work In Air-Gapped AI Environments?

Yes. AccuKnox supports isolated deployments, and runtime enforcement continues even if the control plane is unavailable.

What’s A Realistic Timeline To Prove Value With A PoC For Zero Trust AI Security?

Typical PoCs run ~1-2 weeks for SaaS and ~2-3 weeks for on-prem, depending on prerequisites and environment readiness.

If you want to validate this blueprint against your AI stack, request a demo to see how a unified approach can reduce operational drag.
