CNAPP Evaluation Checklist: 15 Questions Before Buyers Choose a Platform

Most CNAPP evaluations stall because teams don’t know what to ask. This checklist gives security architects and cloud leads 15 scored evaluation questions spanning runtime coverage, compliance depth, DevSecOps integration, and AI workload support — so every vendor demo counts. Includes a downloadable scorecard and a pre-filled comparison of six leading platforms.


TL;DR

- Comprehensive evaluation framework: 15 structured questions across 6 critical dimensions—runtime security, Zero Trust, DevSecOps, compliance, AI workload security, and operational fit—ensuring a complete, testable vendor assessment.

- Focus on what others miss: Key areas like AI workload security and Zero Trust enforcement are absent from common industry frameworks.

- Built for real-world validation: Each question is designed to be tested during a live Proof of Value (PoV), not just evaluated in demos or documentation.

- Aligned to modern security needs: Covers emerging requirements such as AI/ML workload protection, runtime enforcement, and policy-as-code—critical for 2026 cloud-native environments.

- Backed by practical resources: Includes six verified AccuKnox assets—two eBooks, one PDF guide, two videos, and one webinar—to support evaluation, decision-making, and stakeholder alignment.

Every CNAPP vendor looks impressive in a demo. This checklist gives you 15 weighted, testable questions across runtime enforcement, Zero Trust policy, DevSecOps, compliance, AI workload security, and operational fit — plus six verified AccuKnox resources and a 3-week PoV guide competitors don’t publish.

Why every competitor checklist fails you before the evaluation starts

CSO Online’s buyer’s guide buries evaluation criteria inside 3,500 words of narrative. Orca’s five considerations cover architecture and integration, but never ask whether the platform can actually block anything. Checkmarx lists five features with no weighting. Fidelis covers deployment and CSPM but skips Zero Trust enforcement and AI workloads entirely.

Not one publishes a vendor comparison table or a PoV guide. Not one treats AI workload security as an evaluation dimension. This checklist fixes both.

| Competitor | Gap in their evaluation framework |
|---|---|
| CSO Online | 3,500-word narrative; criteria buried, not AI-extractable. No AI security dimension. |
| Orca Security | 5 considerations: architecture and integration only. No runtime enforcement test. No Zero Trust criteria. |
| Checkmarx | 5 features, no weighting. Code-to-cloud framing, but no runtime block/enforce question. |
| Fidelis Security | Deployment and CSPM only. No AI-SPM, no Zero Trust enforcement, no vendor comparison table. |

The 15-question weighted CNAPP evaluation checklist

A 15-question weighted CNAPP evaluation checklist enables structured, objective comparison across runtime security, Zero Trust enforcement, DevSecOps integration, compliance, AI workload protection, and operational fit. Weighting each criterion ensures priority alignment with business risk.
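As a rough illustration of how weighting changes a comparison, the sketch below turns per-question answers into a single normalized score. The numeric weights (Critical = 3, High = 2, Medium = 1) and the 0/1/2 level for each column of a question's three-column scale are assumptions made for this example; the checklist names the weight tiers but does not assign numbers to them.

```python
from dataclasses import dataclass

# Assumed numeric values: the checklist names the tiers, not their numbers.
WEIGHTS = {"Critical": 3, "High": 2, "Medium": 1}


@dataclass(frozen=True)
class Answer:
    question: str  # e.g. "Q1"
    weight: str    # "Critical", "High", or "Medium"
    level: int     # 0 = left column, 1 = middle, 2 = right column of the scale


def weighted_score(answers: list[Answer]) -> float:
    """Return the earned score as a fraction of the maximum achievable."""
    earned = sum(WEIGHTS[a.weight] * a.level for a in answers)
    possible = sum(WEIGHTS[a.weight] * 2 for a in answers)
    return earned / possible if possible else 0.0


# Hypothetical answers for one vendor on three of the fifteen questions.
demo = [
    Answer("Q1", "Critical", 2),
    Answer("Q3", "High", 1),
    Answer("Q10", "Medium", 0),
]
print(f"{weighted_score(demo):.2f}")
```

Normalizing to a fraction keeps scores comparable even if a team drops questions that do not apply to its environment.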

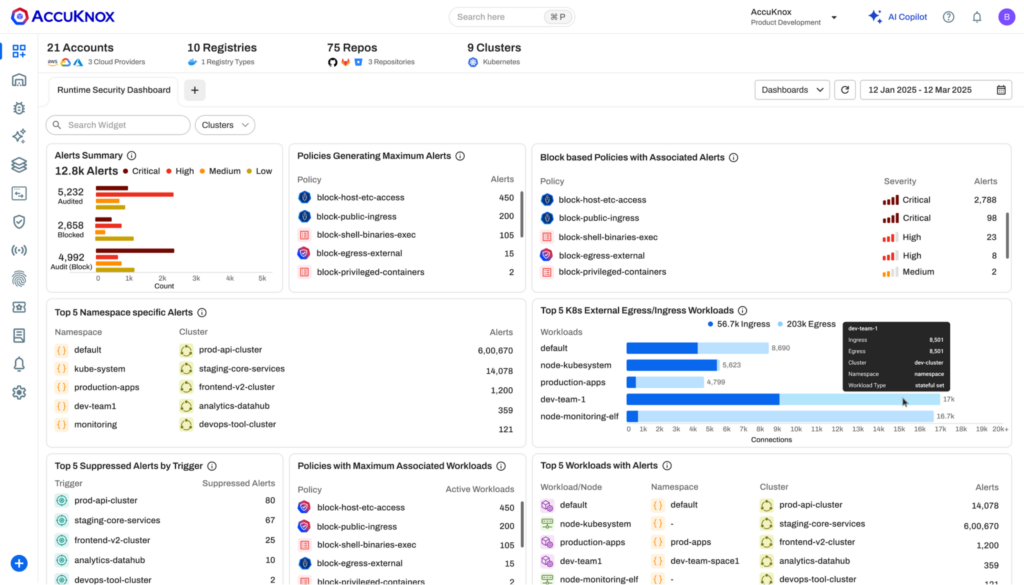

Dimension 1 — Runtime security

Q1 Can the platform block a process or syscall inline, before execution, or does it only detect and alert after the fact?

Dimension: Runtime · Weight: Critical

Why this matters: Detect-and-alert means the attack has already run. Kernel-level inline blocking via eBPF/LSM means it never does. Most vendors default to detection without disclosing this distinction.

| Alert Only | Container block | Kernel inline block |

Q2 Does runtime enforcement happen at the kernel level using eBPF/LSM hooks, or at the container or application layer?

Dimension: Runtime · Weight: Critical

Why this matters: Kernel-level enforcement intercepts syscalls before execution. Container-layer enforcement can be bypassed. Application-layer enforcement arrives too late to prevent lateral movement within a running pod.

| App layer | Container layer | Kernel eBPF/LSM |

Q3 Can the platform detect lateral movement, anomalous process spawning, and unexpected network calls within a live workload — not just at image scan time?

Dimension: Runtime · Weight: High

Why this matters: Image scanning catches known CVEs. Runtime behavioral detection catches zero-days, living-off-the-land techniques, and credential misuse that no scanner flags.

| Scan Only | Limited runtime | Live detection |

Dimension 2 — Zero Trust policy enforcement ★ Not in competing checklists

Q4 Does the platform support Zero Trust policy-as-code — where workload access rules are versioned, enforced programmatically, and auditable?

Dimension: Zero Trust · Weight: Critical

Why this matters: Manual rules erode between audits. Policy-as-code means security rules live in version control, are reviewed like application code, and are enforced consistently without drift.

| No | Manual rules | Policy-as-code |
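To make "policy-as-code" concrete, here is a minimal sketch of access rules kept as versioned data with a default-deny check that emits an audit record for every decision. The workload names, ports, and version string are hypothetical, and this is not any vendor's policy format; it only illustrates the versioned, programmatic, auditable properties the question asks about.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    source: str       # workload label making the call
    destination: str  # workload label being called
    port: int


# Hypothetical rules; in practice this data would live in a reviewed,
# version-controlled file rather than inline in a script.
POLICY_VERSION = "2026-01-15.1"
ALLOW = frozenset({
    Rule("checkout", "payments", 8443),
    Rule("payments", "ledger-db", 5432),
})


def evaluate(source: str, destination: str, port: int) -> dict:
    """Default-deny check that returns an audit record for every decision."""
    allowed = Rule(source, destination, port) in ALLOW
    return {
        "policy_version": POLICY_VERSION,
        "request": f"{source}->{destination}:{port}",
        "decision": "allow" if allowed else "deny",
    }


print(evaluate("checkout", "payments", 8443))
print(evaluate("checkout", "ledger-db", 5432))
```

Because each audit record carries the policy version, a reviewer can trace any decision back to the exact commit of the rules that produced it.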

Q5 Does the platform automatically generate security policies from observed workload behavior, or does every policy require manual authoring?

Dimension: Zero Trust · Weight: High

Why this matters: Manual policy authoring doesn’t scale in dynamic Kubernetes environments. Auto-generated policies from behavioral baselines enforce least-privilege from day one.

| Manual Only | Templates | Auto-generated |
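The idea behind auto-generated policy can be sketched as building a per-workload allowlist from observed behavior and denying anything outside it. The event tuples below are a hypothetical format invented for this example; real platforms collect richer telemetry and typically add a review step before enforcing.

```python
from collections import defaultdict

# Hypothetical observation records: (workload, kind, value).
observed = [
    ("web", "process", "/usr/sbin/nginx"),
    ("web", "network", "api:8080"),
    ("web", "process", "/usr/sbin/nginx"),  # duplicates collapse into the set
    ("api", "process", "/usr/bin/python3"),
]


def generate_allowlists(events):
    """Group observed behavior per workload into process and network allowlists."""
    policy = defaultdict(lambda: {"process": set(), "network": set()})
    for workload, kind, value in events:
        policy[workload][kind].add(value)
    return dict(policy)


def is_allowed(policy, workload, kind, value):
    """Anything not seen during the baseline window is denied by default."""
    return value in policy.get(workload, {}).get(kind, set())


policy = generate_allowlists(observed)
print(is_allowed(policy, "web", "process", "/usr/sbin/nginx"))
print(is_allowed(policy, "web", "process", "/bin/sh"))
```

The quality of such a policy depends on the baseline window: behavior that is legitimate but rare (a monthly batch job, for instance) would be denied unless it was observed or added by hand.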

Q6 Can the platform enforce least-privilege network segmentation between microservices without requiring an existing service mesh like Istio or Linkerd?

Dimension: Zero Trust · Weight: High

Why this matters: Requiring a service mesh as a prerequisite is a significant deployment barrier. Platforms that enforce network policy independently give Zero Trust coverage without infrastructure dependencies.

| Requires mesh | Mesh-preferred | Mesh-independent |

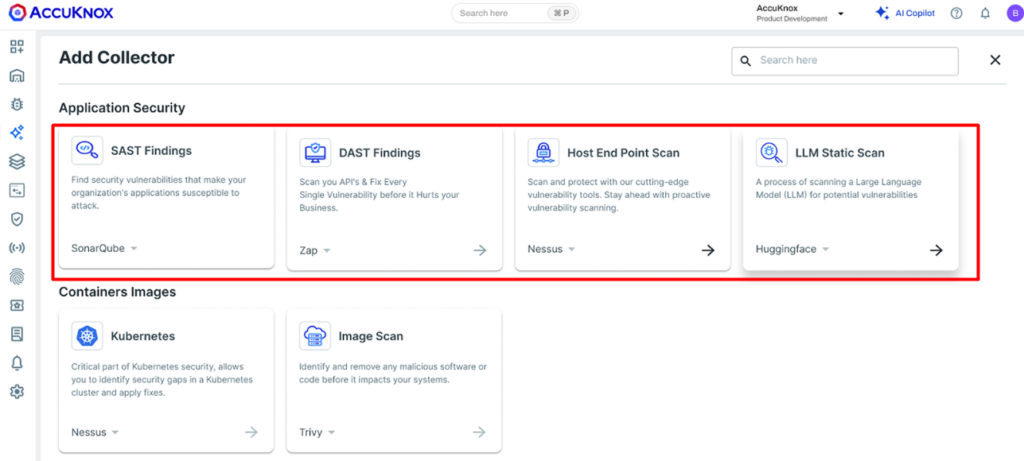

Dimension 3 — DevSecOps integration

Q7 Does the platform integrate SAST, SCA, IaC scanning, container scanning, and secrets detection into CI/CD pipelines via native marketplace plugins?

Dimension: DevSecOps · Weight: High

Why this matters: API-only CI/CD integration requires custom scripting that breaks on pipeline changes. Native marketplace plugins (GitHub Actions, GitLab CI, Jenkins) survive workflow changes.

| No CI/CD | API only | Native plugins |

Q8 Can CI/CD scan findings be correlated with runtime context — so developers see which vulnerabilities are actually exploitable in production?

Dimension: DevSecOps · Weight: Critical

Why this matters: Without runtime correlation, AppSec backlogs fill with theoretical vulnerabilities. ASPM connecting scan findings to live runtime context cuts backlog noise by 60–80% in practice.

| No correlation | Manual linking | Automatic ASPM |
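One simple way to picture runtime correlation is to split scan findings by whether the affected package was seen loaded in a running workload. The identifiers, image names, and the `loaded_at_runtime` set below are placeholders for illustration; "loaded in production" is used here as a proxy for exploitability, not as proof of it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve: str      # placeholder identifier, not a real CVE
    image: str
    package: str


# Hypothetical scanner output.
findings = [
    Finding("CVE-EXAMPLE-1", "shop/api:1.4", "libfoo"),
    Finding("CVE-EXAMPLE-2", "shop/api:1.4", "libbar"),
    Finding("CVE-EXAMPLE-3", "shop/batch:0.9", "libfoo"),
]

# Hypothetical runtime telemetry: (image, package) pairs seen loaded in production.
loaded_at_runtime = {("shop/api:1.4", "libfoo")}


def prioritize(findings, loaded):
    """Split findings into those loaded at runtime and those that are dormant."""
    live = [f for f in findings if (f.image, f.package) in loaded]
    dormant = [f for f in findings if (f.image, f.package) not in loaded]
    return live, dormant


live, dormant = prioritize(findings, loaded_at_runtime)
print("fix first:", [f.cve for f in live])
print("backlog:", [f.cve for f in dormant])
```

During a PoV, comparing the size of the two lists on your own images gives a direct read on how much backlog the correlation actually removes.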

Q9 Does the platform auto-create structured tickets (Jira, ServiceNow, PagerDuty) from findings — with severity, asset context, and remediation guidance pre-populated?

Dimension: DevSecOps · Weight: High

Why this matters: Webhook-only integrations create raw event floods in unowned queues. Structured auto-tickets with ownership routing mean findings become assigned, tracked work.

| No ticketing | Webhook only | Structured tickets |
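The difference between a raw webhook event and a structured ticket can be sketched as a small transformation that attaches severity, asset context, remediation guidance, and an owner. The field names and the `OWNERS` map are assumptions for this example and do not correspond to the Jira, ServiceNow, or PagerDuty APIs.

```python
import json

# Hypothetical service-to-owner map; a real one might come from a service catalog.
OWNERS = {"payments": "team-payments", "checkout": "team-storefront"}


def build_ticket(finding: dict) -> dict:
    """Turn a raw finding into a ticket with context and an assigned owner."""
    service = finding["service"]
    return {
        "title": f"[{finding['severity'].upper()}] {finding['summary']}",
        "severity": finding["severity"],
        "asset": {"service": service, "cluster": finding["cluster"]},
        "remediation": finding.get("remediation", "No guidance supplied"),
        # Unowned services fall back to a triage queue instead of being dropped.
        "assignee": OWNERS.get(service, "security-triage"),
    }


raw = {
    "summary": "Hardcoded secret in deploy.yaml",
    "severity": "high",
    "service": "payments",
    "cluster": "prod-east",
    "remediation": "Rotate the secret and load it from a secrets manager",
}
ticket = build_ticket(raw)
print(json.dumps(ticket, indent=2))
```

When reviewing a vendor's sample ticket, the same four things are worth checking: severity, asset context, remediation text, and a named owner.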

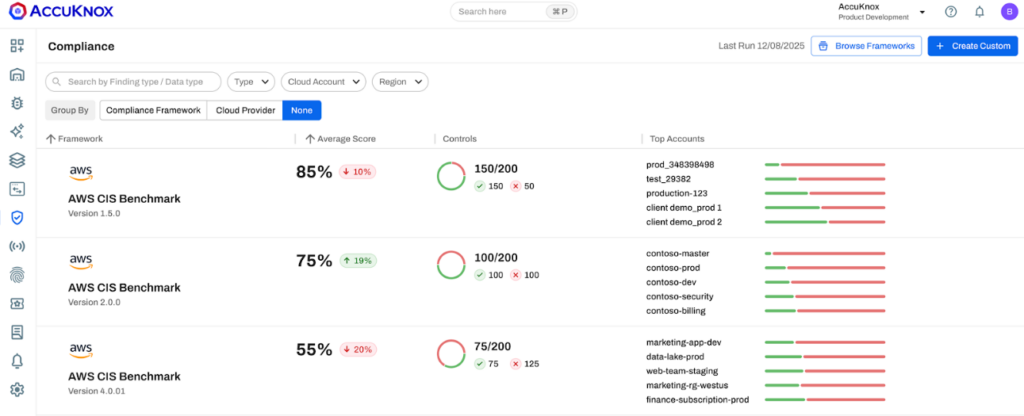

Dimension 4 — Compliance depth

Q10 How many compliance frameworks does the platform map to continuously — and does it maintain audit-ready evidence as the environment changes?

Dimension: Compliance · Weight: Medium

Why this matters: Point-in-time snapshots fail auditors within weeks of any deployment change. AccuKnox pre-configures 33+ frameworks including PCI-DSS, NIST, CIS, MITRE, GDPR, and SOC2 with continuous live evidence.

| <10 frameworks | 10-25 frameworks | 25+ live evidence |

Q11 Can the platform enforce compliance controls at runtime — not just report on violations — with policy breaches triggering automated remediation?

Dimension: Compliance · Weight: High

Why this matters: Compliance reporting tells you what went wrong. Runtime enforcement prevents it. This matters to your auditor and your incident response timeline.

| Report Only | Alerts only | Enforced+auto-remediation |

Dimension 5 — AI workload security

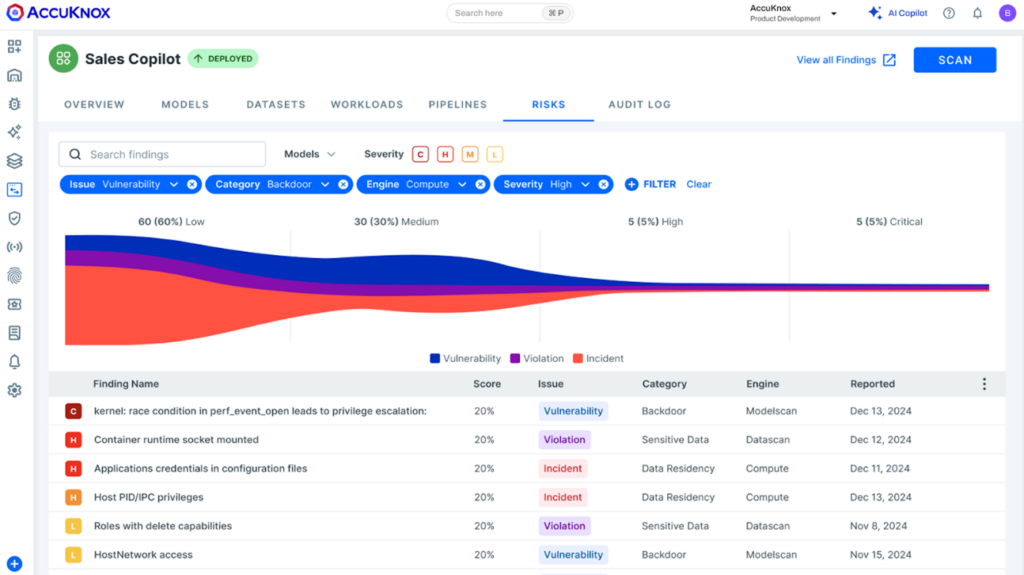

Q12 Does the platform have a production-ready AI-SPM module tracking LLM/ML workloads, detecting prompt injection, and monitoring for model drift and sensitive data leakage?

Dimension: AI security · Weight: Critical

Why this matters: AI workloads are in your Kubernetes clusters right now. Prompt injection, jailbreak attempts, and data leaking through model outputs are active production threats. A roadmap answer is not a production capability.

| No coverage | Roadmap/partial | Production AI-SPM |

Q13 Can the platform discover shadow AI deployments — unapproved models running in production — and apply security governance policies to them?

Dimension: AI security · Weight: High

Why this matters: Shadow AI is the new shadow IT. Models outside approved governance carry data risk, compliance risk, and prompt injection exposure. Discovery plus policy enforcement is the 2026 standard.

| No | Discovery only | Discovery + policy |
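At its simplest, shadow AI discovery is a comparison between what is running and what has been approved, followed by a governance action for the difference. The registry, workload names, and action labels below are hypothetical; they illustrate the "discovery + policy" column rather than any product's behavior.

```python
# Hypothetical registry of models approved by the governance process.
APPROVED_MODELS = {"support-summarizer", "fraud-scorer"}

# Hypothetical discovery output: workload name -> metadata.
discovered = {
    "support-summarizer": {"namespace": "prod", "kind": "llm"},
    "resume-ranker": {"namespace": "prod", "kind": "llm"},
    "fraud-scorer": {"namespace": "prod", "kind": "ml"},
}


def find_shadow_ai(discovered, approved):
    """Return discovered AI workloads that are missing from the approved registry."""
    return sorted(name for name in discovered if name not in approved)


def governance_action(name, approved):
    """Discovery alone stops at the list; policy decides what happens next."""
    return "monitor" if name in approved else "quarantine-and-review"


for name in discovered:
    print(name, "->", governance_action(name, APPROVED_MODELS))
```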

Dimension 6 — Operational fit

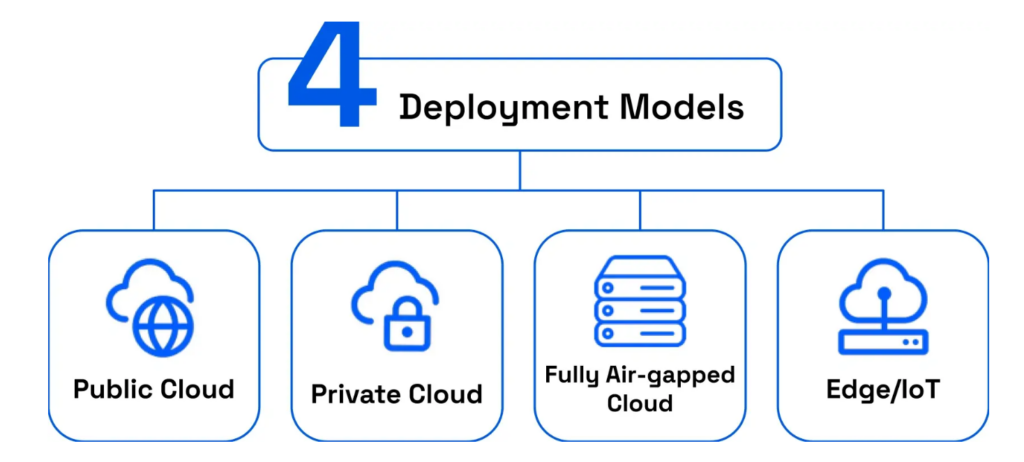

Q14 Can the platform deploy across public cloud, private cloud, edge/IoT, and fully air-gapped environments with consistent Zero Trust policy semantics in all of them?

Dimension: Operations · Weight: High

Why this matters: Most CNAPP vendors support only public cloud. Air-gapped support through a partner means a different SLA, policy engine, and support contract; in effect, the vendor itself does not support it.

| Public cloud only | Cloud + private | All incl. air-gapped |

Q15 Does the vendor have demonstrable runtime enforcement lineage — with a PoV available in your own environment before you sign any contract?

Dimension: Operations · Weight: Critical

Why this matters: A vendor who declines a 3-week PoV of its runtime enforcement claims is not confident those claims hold under real conditions. Demonstrable means testable in your environment, not in a pre-loaded demo.

| Datasheet Only | Reference customer | PoV in your env |

How six platforms compare across these six dimensions

Based on public documentation as of Q1 2026. Full = present | Partial = limited/roadmap | None = absent. Verify in your own PoV.

| Platform | Runtime block | Zero Trust | DevSecOps | Compliance | AI-SPM | Air-gapped |
|---|---|---|---|---|---|---|
| AccuKnox | Full | Full | Full | Full | Full | Full |
| Palo Alto Prisma | Partial | Partial | Full | Full | Partial | Partial |
| Wiz | Partial | None | Full | Full | Partial | None |
| Orca Security | None | None | Partial | Full | None | None |
| Sysdig | Full | Partial | Partial | Partial | None | None |
| Lacework | Partial | None | Partial | Partial | None | None |

Red flags — phrases that should end a vendor demo immediately

- “We detect and alert on runtime anomalies” — no mention of block means they cannot block. Detection is observation, not enforcement.

- “AI security is on our H2 roadmap” — that is not a production capability.

- “We support air-gapped through a partner” — different SLA, different policy engine, different support contract. The vendor does not support it.

- “Policy enforcement happens at the admission controller” — bypassed by lateral movement inside an already-running pod.

- “We map to 10 major compliance frameworks” — the 2026 floor is 25+. AccuKnox pre-configures 33+ out-of-the-box.

- “We forward enriched events to your SIEM” — ask for a sample ticket with asset context and owner routing. If unavailable, it is raw noise.

3-week PoV guide — how to test all 15 questions in your own environment

- Week 1 · Runtime (Q1–2): Deploy the agent, simulate a privilege escalation, and verify that it is blocked at the kernel level, not just alerted on.

- Week 1 · Zero Trust (Q4–6): Trigger auto-policy generation from an observed workload, attempt an out-of-policy network call, and verify that it is denied without any service mesh dependency.

- Week 2 · CI/CD (Q7–9): Push a commit containing a hardcoded secret and an insecure IaC template, verify that the pipeline gate catches both, and check that the auto-created tickets have assigned owners.

- Week 2 · AI-SPM (Q12–13): Verify that the platform discovers, classifies, and applies policy to any LLM service running in your environment. If you are not running an LLM service, ask the vendor to demonstrate shadow AI discovery.

- Week 3 · Compliance + ops (Q10–11, Q14): Run the compliance report, export the evidence package, and confirm that it matches your auditor’s format. Test policy consistency across the private cloud or edge, if applicable.
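A small tracker can confirm which questions a PoV schedule actually touches. The sketch below encodes the question ranges exactly as listed in the plan above; the dictionary keys and function name are made up for this example. Run as written, it reports Q3 and Q15 as having no dedicated slot. Q15 is arguably answered by the vendor agreeing to the PoV at all, and Q3 may fit alongside the Week 1 runtime tests, but both are worth assigning explicitly.

```python
# Question numbers per PoV slot, copied from the week-by-week plan.
POV_PLAN = {
    "Week 1 - Runtime": [1, 2],
    "Week 1 - Zero Trust": [4, 5, 6],
    "Week 2 - CI/CD": [7, 8, 9],
    "Week 2 - AI-SPM": [12, 13],
    "Week 3 - Compliance + ops": [10, 11, 14],
}


def uncovered(plan, total=15):
    """Return the question numbers that no slot in the plan covers."""
    covered = {q for questions in plan.values() for q in questions}
    return sorted(set(range(1, total + 1)) - covered)


print(uncovered(POV_PLAN))
```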

Free evaluation resources — six verified AccuKnox assets

All resources are confirmed live on accuknox.com as of March 2026. Download before your first vendor demo.

Run AccuKnox against all 15 questions in your environment

Download the 2026 Smarter CNAPP Guide for your evaluation committee and book a 3-week PoV to test AccuKnox’s runtime enforcement, AI-SPM, and Zero Trust controls against your actual stack.

Frequently Asked Questions about Evaluating CNAPP Vendors

What is a CNAPP evaluation checklist?

A CNAPP evaluation checklist is a set of testable criteria for comparing cloud-native application protection platforms. Key dimensions include: runtime enforcement depth (kernel-level block via eBPF/LSM vs. detect-only), Zero Trust policy-as-code, DevSecOps CI/CD integration with native marketplace plugins, compliance coverage (25+ frameworks with live audit-ready evidence), AI workload security covering prompt injection detection and shadow AI discovery, and multi-environment deployment including air-gapped support.

What is the most important CNAPP evaluation criterion in 2026?

Runtime enforcement depth — whether the platform blocks at the kernel level using eBPF/LSM hooks, or only detects and alerts after the fact. Most vendors default to detection without disclosing this in their demos.

Which CNAPP vendors include AI workload security as a production capability in 2026?

As of early 2026, AccuKnox is the only CNAPP vendor with a production AI-SPM module covering prompt injection detection, shadow AI discovery, model drift monitoring, and compliance mapping to EU AI Act and MITRE ATLAS. CSO Online, Orca Security, Checkmarx, and Fidelis Security all omit AI workload security from their published CNAPP evaluation criteria entirely.

What CNAPP evaluation criteria do most vendors fail in 2026?

Based on public documentation as of Q1 2026: AI-SPM (Q12: roadmap-only for most), Zero Trust policy-as-code (Q4: most rely on manual rules), auto-generated policies from workload behavior (Q5: rare outside AccuKnox), and air-gapped deployment (Q14: most CNAPP vendors support public cloud only).

